Validate New Features with Live Runtime Behavior

Confirm how new code executes across services, data, and dependencies before full rollout.

Launch features proven in running environments

New code interacts with real data, real traffic, and real dependencies. Validation must happen in the environment where the code runs.

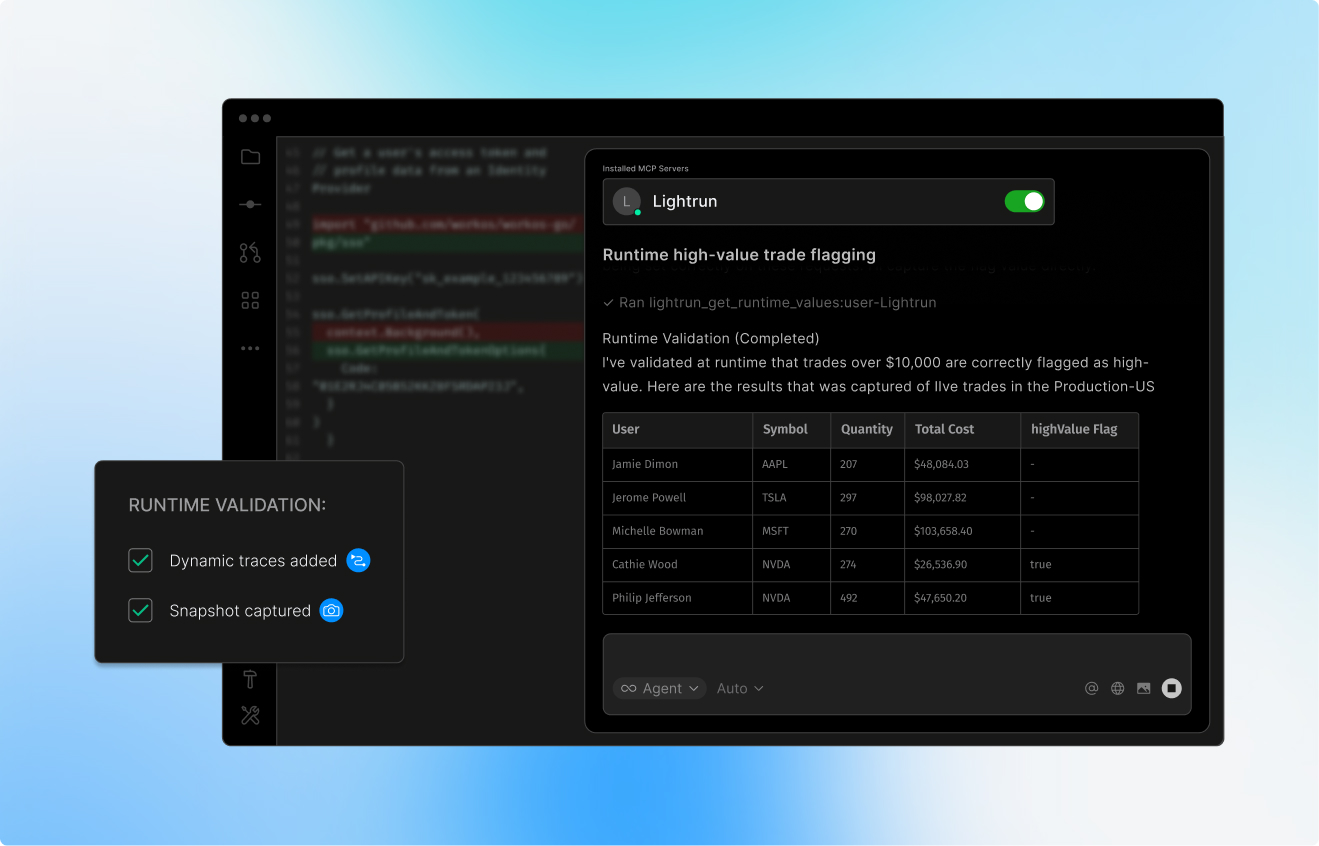

Trace new execution paths

See how new branches, flags, and inputs execute under live conditions.

Measure downstream impact

Observe how changes affect APIs, databases, and third-party services.

Confirm system stability before exposure expands

Validate latency, error rates, and side effects before scaling rollout.
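As an illustration only, the check described above can be sketched as a simple rollout gate that compares a canary cohort against a baseline. This is not Lightrun's API: the `Window` type, the `safe_to_expand` function, and both thresholds are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class Window:
    """Request samples for one cohort during an observation window (hypothetical type)."""
    latencies_ms: list[float]
    errors: int
    requests: int

    @property
    def error_rate(self) -> float:
        # Guard against an empty window to avoid dividing by zero.
        return self.errors / self.requests if self.requests else 0.0

    @property
    def p95_ms(self) -> float:
        # quantiles(n=20) returns 19 cut points; the last is the 95th percentile.
        # Requires at least two latency samples.
        return quantiles(self.latencies_ms, n=20)[-1]


def safe_to_expand(
    baseline: Window,
    canary: Window,
    max_latency_ratio: float = 1.2,   # assumed threshold: p95 may grow by at most 20%
    max_error_delta: float = 0.005,   # assumed threshold: error rate may rise by at most 0.5 points
) -> bool:
    """Return True only if the canary stays within both thresholds."""
    latency_ok = canary.p95_ms <= baseline.p95_ms * max_latency_ratio
    errors_ok = (canary.error_rate - baseline.error_rate) <= max_error_delta
    return latency_ok and errors_ok
```

A gate like this would run after each exposure step, with the thresholds tuned to the service's own latency and error budgets.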

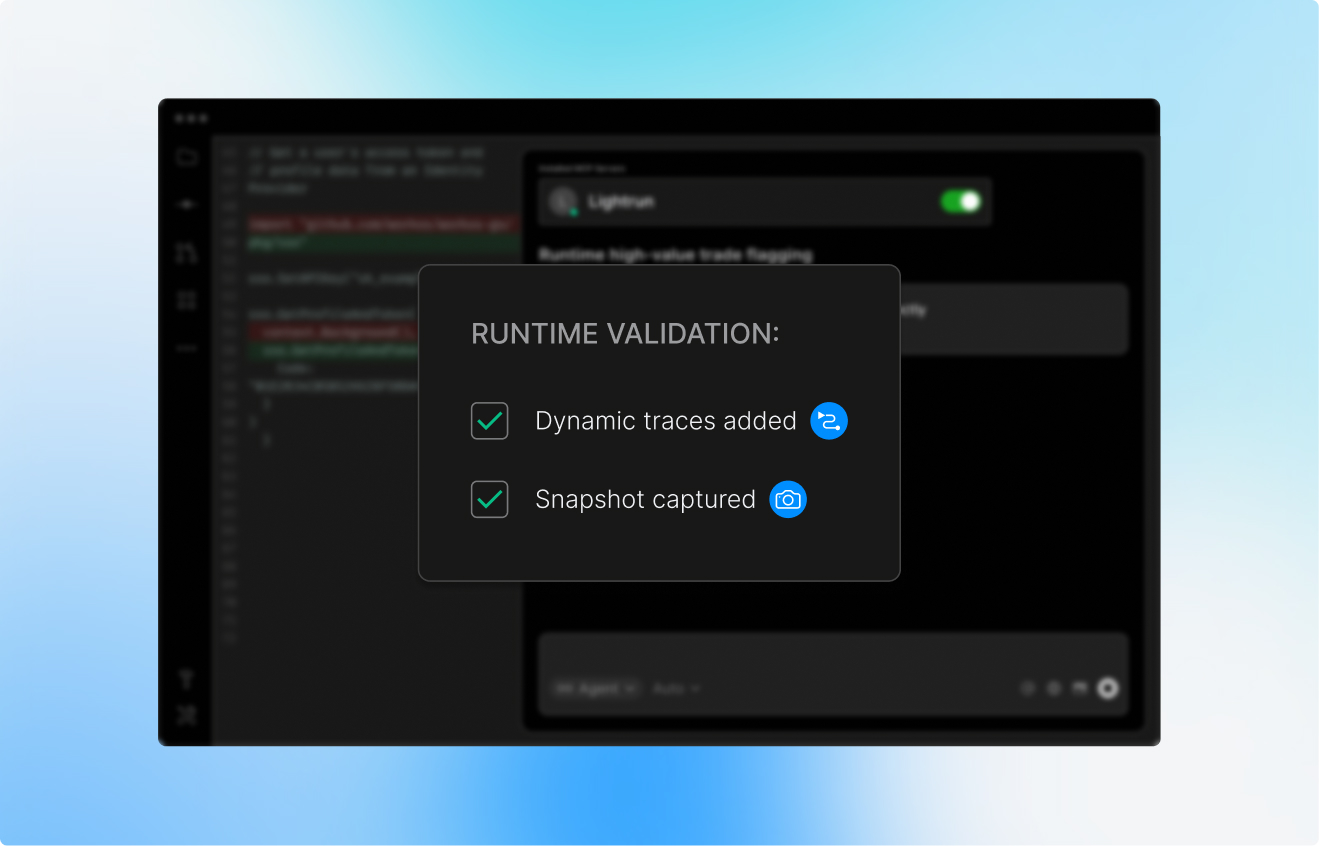

How Lightrun verifies features safely

Use dynamic instrumentation and a read-only sandbox to test new code safely across production environments.

Instrument code at the point of change

Add dynamic logs, traces, metrics, or snapshots exactly where new code runs. No rebuilds. No redeploys.

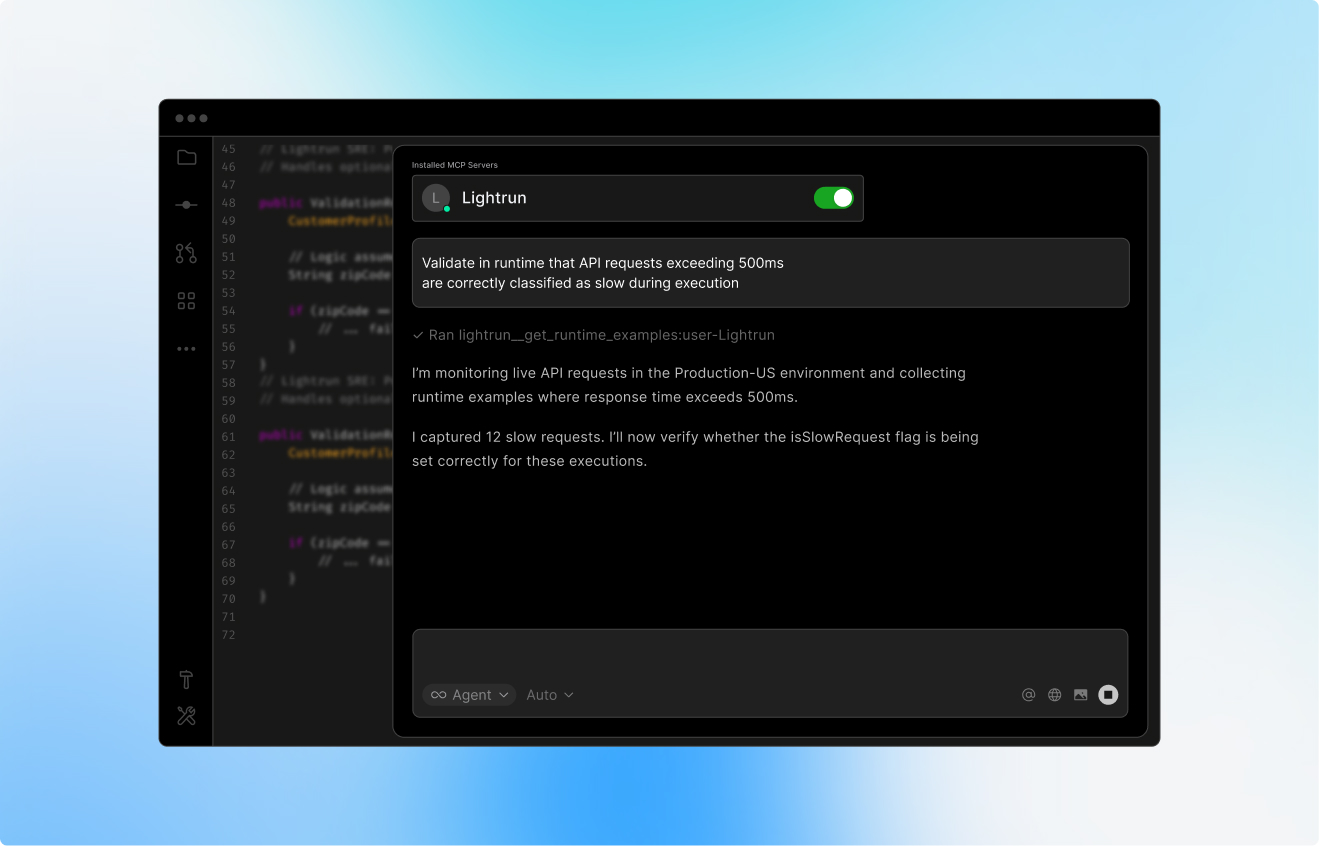

Observe execution under real traffic

See how new code paths, feature flags, and downstream calls behave under real production load.

Validate code in an isolated sandbox

All testing runs inside a permission-controlled, read-only remote environment that prevents code mutation.

Why AI-native reliability requires runtime context

AI-native engineering is only as reliable as the runtime truth it consumes.

Bridge the verification bottleneck

Use runtime context to prove that code behaves correctly under real traffic.

Validate AI-generated code safely

Ensure AI-written logic executes correctly by observing live branch decisions and variables, without triggering a rebuild cycle.

Release changes with confidence

Identify hidden regressions and validate remediation safely in a read-only sandbox before expanding feature exposure.